Key Takeaways for Employers

- Traditional pyramid models are shifting toward diamond-shaped organizations, where entry-level roles shrink and mid-level expertise expands.

- As automation handles routine tasks, demand is growing for professionals who can apply judgment, oversee AI outputs, and orchestrate human–AI workflows.

- Cost-driven AI adoption can erode morale, weaken leadership pipelines, reduce diverse perspectives, and create accountability gaps.

- While most professionals want employer-supported AI development, only a minority report receiving formal training — creating a readiness gap.

- Eliminating foundational roles too quickly risks hollowing out institutional knowledge and long-term succession planning.

- Organizations that intentionally balance automation with reskilling, redeployment, and human oversight will build more resilient, future-ready teams.


As artificial intelligence reshapes how work gets done across Canada, the 2026 Un-Salary Guide has made one thing clear: employers and workers are navigating a moment of profound structural change. AI adoption is accelerating, yet trust, readiness and capability vary widely across the workforce. These shifts are influencing everything from role design to career pathways, and they are raising urgent questions about how organizations build, grow and retain talent in an AI-enabled economy.

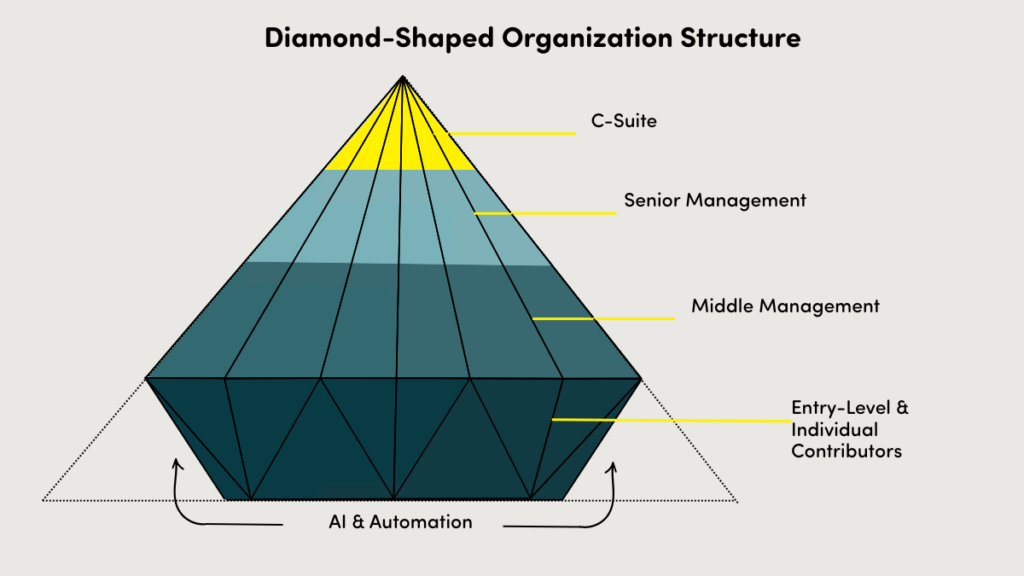

Against this backdrop, organizations are beginning to move away from traditional pyramid structures toward what experts call diamond-shaped organizations. These models emerge when automation reduces the number of entry-level roles and expands the mid-layer where professional judgment, analysis and human-AI orchestration occur.

At our recent panel event, “The Future of Work: AI, Trust, and Upskilling in the Workforce,” Shesta Babar (Partner, People & Change Leader, KPMG) explained what this shift means for Canadian employers and why strategies driven solely by short-term cost savings risk weakening leadership pipelines, eroding morale and narrowing the diversity of thought organizations rely on to innovate.

In this follow-up conversation, Shesta discusses the rise of the diamond model, the risks of hollowing out entry-level roles, and how organizations can balance automation with human capability while protecting leadership pipelines, innovation, and long-term organizational health.

From Pyramid to Diamond Model

For decades, most large Canadian and other North American organizations have been structured like a pyramid: a broad base of individual contributors, several layers of management, and a narrow top of senior leadership. But that shape is changing.

What is the Diamond Model?

The “diamond model” reflects a fundamental shift in organizational design. Traditionally, the bottom layer of the pyramid was filled with entry-level employees performing manual or routine tasks. As you moved upward, responsibilities expanded—team leadership, portfolio management, strategic oversight.

Now, imagine trimming the bottom corners of that pyramid. What remains is a diamond. Why? Because automation and AI are reducing the need for large numbers of individual contributors at the base. Entire processes are being automated, particularly in professional services firms and in organizations that invested early in shared services or AI-centric workflows. Findings from Agilus’ AI Readiness and Work in Canada echo this shift. At the same time, employers report competing priorities for AI (productivity, innovation, employee experience, and cost savings), with no single direction dominating strategy.

Yet humans are far from obsolete. AI still requires oversight, judgment, and quality control. These tasks are increasingly concentrated in the expanded mid-layer of the diamond. That’s why the diamond’s widest point is now the mid-level: the zone where human-AI orchestration happens.

The Risks of Over-Automation: What Leaders Need to Know

AI adoption is accelerating, but not without unintended consequences. A recent KPMG global study found that Canadians remain cautious, scoring below the global average on the AI trust index. Interestingly, Agilus’ AI Readiness in the Canadian Workforce Survey 2026 found that employed professionals are more likely to trust and use AI than unemployed job seekers, underscoring the fear factor when technology feels like a threat. The majority of employed respondents say they feel confident using AI tools, compared with only about 35% of unemployed respondents. Limited access to training widens this gap: only about 30% of workers report receiving any AI training from their employer, even though more than 70% say they want employer-supported development.

When organizations adopt AI primarily for short-term cost savings, they risk creating problems that are far harder to fix later. We asked Shesta what she sees as the biggest risks of over-automation:

- Declining Employee Morale

“Fear erodes performance,” Shesta explains. “When employees believe their jobs may disappear, engagement drops and innovation suffers. Psychological safety is essential for high-performing cultures.”

- Loss of Institutional Knowledge

Entry-level roles are where future leaders learn the fundamentals. “I’ve been at KPMG for 16 years,” she says. “That foundational experience is what enables me to lead effectively today.” Automate too much too quickly, and you eliminate the future pool of people who understand critical processes.

- Fragile Career Pathways

A strong leadership pipeline depends on developing well-rounded leaders who have grown through the organization and understand how the business operates at different levels and across functions. When early-career roles are eliminated, employees lose the chance to build that breadth of experience. “When individuals aren’t given opportunities to progress through the business, companies ultimately weaken the leadership cohort,” Shesta cautions. “Organizations need leaders who have lived the work, not just overseen it.”

- Loss of Diverse Perspectives

AI learns from patterns in data, not lived experience. “Over-reliance on automation risks homogenized thinking,” she adds. “Humans bring different viewpoints and ways of solving problems.”

- Accountability Gaps

As organizations introduce more AI-driven decision-making, questions inevitably arise about who is ultimately responsible for those decisions. “Accountability still sits squarely with the Board and the Executive Leadership Team,” Shesta emphasizes. “What changes is the complexity of governance.” As AI becomes more embedded in core processes, leaders must ensure they fully understand how decisions are being made and put the right oversight structures in place.

- Erosion of Skill Development

When AI completes end-to-end processes, employees lose opportunities to build foundational skills. “Organizations must embed continuous learning into AI-enabled workflows,” she says. “People need to understand what is automated, why, and how.” That means understanding the full end-to-end workflow and being deliberate about which tasks remain human-led and which are AI-driven. Creating this balance requires strategy and intentional execution, so employees continue to learn, contribute, and grow alongside technology.

These risks underscore a critical truth: AI adoption isn’t just about technology; it’s about people. Organizations that focus solely on cost-cutting may find themselves with weakened leadership pipelines, eroded morale, and diminished diversity of thought. So, how can leaders embrace automation without sacrificing human capability?

Balancing Automation With Human Capability

Eliminating entry-level and back-office roles may look like a quick win for cost savings; however, it comes at a price. These positions are the foundation for future leaders. They’re where employees learn your culture, understand processes, and build the institutional knowledge that makes them effective managers later. Remove them, and today’s savings could become tomorrow’s leadership gap. Shesta’s advice: “You need to slow down to speed up.”

- Get Clear on Your “Why”

If AI adoption is driven purely by next-quarter cost savings, decisions will be short-sighted. If the goal is long-term innovation and impact, strategies must be designed differently.

- Understand Roles Before Eliminating Them

Before leaders cut jobs, they must dig into what those roles actually do. What requires human judgment? What could be automated? And most importantly, can these people be reskilled or redeployed? “It’s easy to cut jobs,” Shesta says. “It’s harder, but far wiser, to ask, ‘Can we repurpose institutional knowledge?’”

- Prioritize Redeployment, Not Just Reduction

Not every role can be saved, but redeployment should always be considered. Look for transferable skills and create alternative pathways into leadership, not just traditional ladders.

- Orchestrate Humans + AI, Not Humans vs. AI

“AI shouldn’t replace humans; it should complement them,” Shesta emphasizes. Think of your organization as two layers: a people layer and an AI layer. Leaders must intentionally design how these layers interact, which requires strategy, agility, and thoughtful execution.

The Bottom Line

AI is not just a technology decision; it’s a people decision. The diamond model signals a future where mid-level expertise becomes critical, but entry-level pathways risk disappearing. If organizations automate without a plan for reskilling, redeployment, and knowledge transfer, they jeopardize morale, diversity, and leadership pipelines. The challenge isn’t choosing between humans and AI. It’s designing a workforce where both thrive. Those who get this balance right will not only drive efficiency but also build resilient, future-ready organizations.